
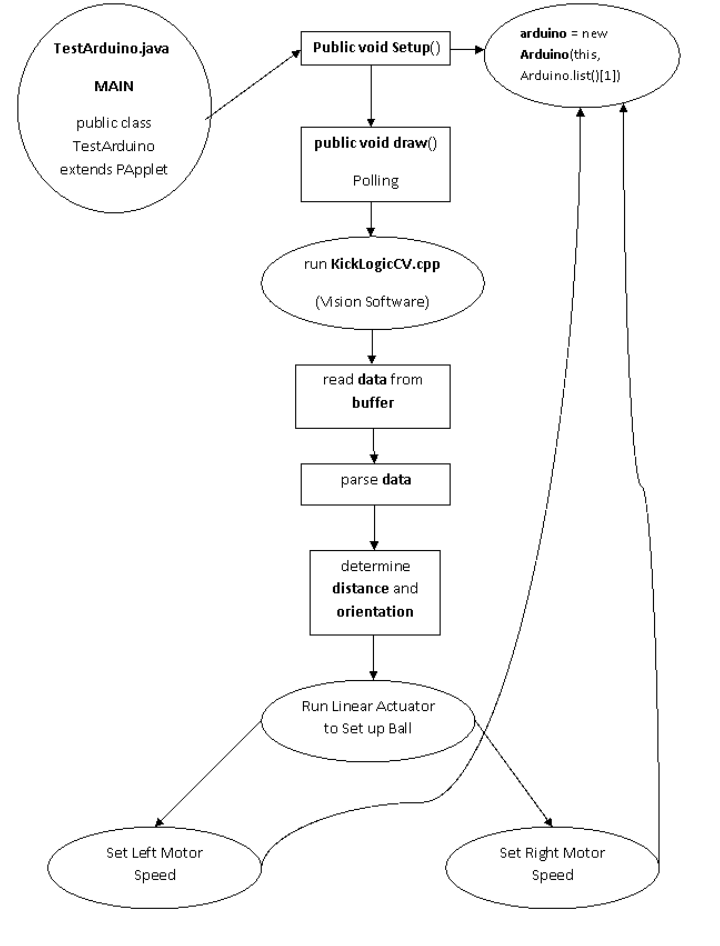

The software design of the first-touch trainer can be broken down into two main sections: the microcontroller software and the vision system.

1. The Microcontroller

To write software for the Arduino Duemilanove microcontroller, the Arduino programming environment was downloaded from the Arduino website. To get a quick grasp of the language, sample sketches were tested on the board. The Arduino language is based on C/C++, and its syntax proved close enough to Java to be picked up quickly.

The software is structured as a continuous loop that waits for input from the computer. Before the speed controllers and actuator relays are activated, the computer must set the speed of the motors and signal the board to begin launching the ball. Once these signals have been received over the USB connection, the following launching sequence runs.

Launching Sequence

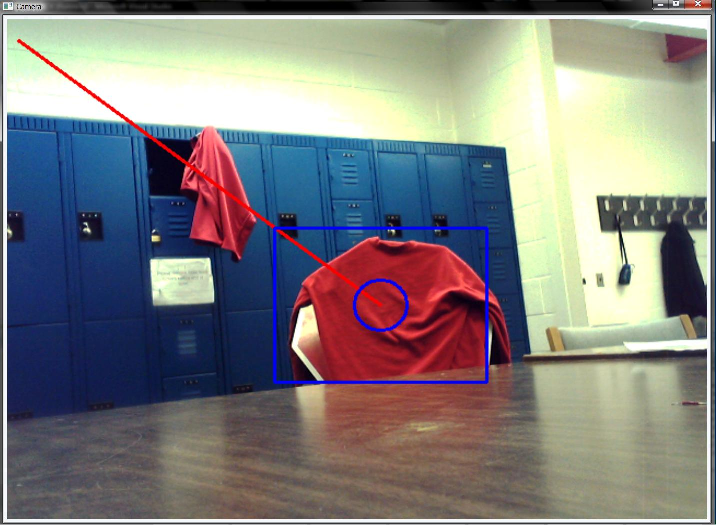

1. Begin moving the actuator in the forward direction

2. The Vision System

To identify the location of a player on the field, the open-source library OpenCV was used with C++. The library makes it easy to interface with a webcam and offers a range of filters for processing the captured images. To determine the player's approximate position, several filters are applied, followed by an algorithm that was developed through trial and error. Averaging the images over multiple frames then gives a more accurate estimate of the player's position and distance. In the current prototype, the webcam is set to identify players wearing a red jersey.

Distance and Position Overview

1. Filter the image for red colour
4. Run a mathematical algorithm to determine the approximate distance and position of the player

Here is a sample of how the computer vision operates in real time:

- This is what the webcam sees:
- This is what the software algorithm generates, and from which it interprets launching distances:

For an overview of the software interactions in the First Touch Machine (FTM), the following flowchart has been created:
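The microcontroller's command loop, which waits for the computer to set the motor speed and then request a launch, can be sketched as a small parser fed one received byte at a time. This is a minimal sketch under assumptions: the two-command text protocol (`S<n>` to set the speed, `L` to launch) and the `CommandParser` class are hypothetical, not the project's actual firmware, and the code is plain C++ so it can be tested off the board.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdlib>

// Hypothetical line-based protocol (an assumption, not the project's own):
//   "S<n>\n"  set the motor speed to n, in the range 0-255
//   "L\n"     request a launch; ignored until a speed has been set
class CommandParser {
public:
    // Feed one received byte, e.g. the value returned by Serial.read().
    void feed(char c) {
        if (c == '\r') return;                 // tolerate CRLF line endings
        if (c != '\n') {
            if (len_ < kMaxLine) line_[len_++] = c;
            else overflow_ = true;             // line too long: discard it
            return;
        }
        line_[len_] = '\0';
        if (!overflow_) handleLine(line_);
        len_ = 0;
        overflow_ = false;
    }

    bool speedSet() const { return speedSet_; }

    int speed() const {
        assert(speedSet_ && "speed queried before any S command");
        return speed_;
    }

    // Returns true once per accepted launch request, then clears the request.
    bool takeLaunchRequest() {
        const bool pending = launchPending_;
        launchPending_ = false;
        return pending;
    }

private:
    static constexpr std::size_t kMaxLine = 16;

    void handleLine(const char* cmd) {
        if (cmd[0] == 'S' && cmd[1] != '\0') {
            char* end = nullptr;
            const long value = std::strtol(cmd + 1, &end, 10);
            // Accept only a complete number within the assumed 0-255 range.
            if (*end == '\0' && value >= 0 && value <= 255) {
                speed_ = static_cast<int>(value);
                speedSet_ = true;
            }
        } else if (cmd[0] == 'L' && cmd[1] == '\0' && speedSet_) {
            launchPending_ = true;
        }
    }

    char line_[kMaxLine + 1] = {};
    std::size_t len_ = 0;
    bool overflow_ = false;
    int speed_ = 0;
    bool speedSet_ = false;
    bool launchPending_ = false;
};
```

On the board, `loop()` would call `parser.feed(Serial.read())` while `Serial.available() > 0`, and start the launching sequence whenever `takeLaunchRequest()` returns `true`. Ignoring launch requests until a speed has arrived mirrors the requirement that the speed be defined before the speed controllers and relays are activated.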
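As a rough illustration of filtering the image for red colour, the sketch below classifies each pixel as red or not and takes the centroid of the red pixels as the player's approximate position in the image. It is a minimal sketch under stated assumptions: the `Frame` and `RedBlob` types and the RGB thresholds are hypothetical, and a simple RGB rule stands in for whatever filters the project actually applied. With OpenCV the same idea is commonly written using `cv::cvtColor` (to HSV), `cv::inRange` and `cv::moments`; plain C++ keeps this example self-contained.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

// Hypothetical frame type: interleaved 8-bit RGB pixels, stored row by row.
struct Frame {
    int width = 0;
    int height = 0;
    std::vector<std::uint8_t> rgb;  // must hold width * height * 3 bytes
};

// Where the red region lies in one frame, in pixel coordinates.
struct RedBlob {
    bool found = false;
    double cx = 0.0;  // centroid column
    double cy = 0.0;  // centroid row
    long pixels = 0;  // number of pixels classified as red
    int top = -1;     // first row containing a red pixel
    int bottom = -1;  // last row containing a red pixel
};

// Assumed thresholds; in practice they would be tuned for camera and lighting.
constexpr int kMinRed = 120;    // red channel must be at least this bright
constexpr int kMinMargin = 50;  // and exceed green and blue by this much

bool isRed(int r, int g, int b) {
    return r >= kMinRed && r - g >= kMinMargin && r - b >= kMinMargin;
}

// Threshold the frame for red and return the centroid (m10/m00, m01/m00) of
// the red pixels. Fewer than minPixels red pixels is reported as not found.
RedBlob findRedBlob(const Frame& f, long minPixels = 1) {
    assert(f.rgb.size() == static_cast<std::size_t>(f.width) * f.height * 3);
    RedBlob blob;
    double sumX = 0.0, sumY = 0.0;  // first-order moments m10 and m01
    for (int y = 0; y < f.height; ++y) {
        for (int x = 0; x < f.width; ++x) {
            const std::size_t i =
                (static_cast<std::size_t>(y) * f.width + x) * 3;
            if (!isRed(f.rgb[i], f.rgb[i + 1], f.rgb[i + 2])) continue;
            ++blob.pixels;  // zeroth-order moment m00
            sumX += x;
            sumY += y;
            if (blob.top < 0) blob.top = y;
            blob.bottom = y;
        }
    }
    if (blob.pixels >= minPixels && blob.pixels > 0) {
        blob.found = true;
        blob.cx = sumX / blob.pixels;
        blob.cy = sumY / blob.pixels;
    }
    return blob;
}
```

The `top` and `bottom` rows give the apparent height of the jersey, which a distance estimate could build on, while `minPixels` is a crude guard against a few stray red pixels being mistaken for a player.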
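The vision system gains accuracy by averaging over multiple frames. One simple way to do this, sketched below, is a moving average of the per-frame position estimates; note that this averages the results rather than the images themselves, which is an assumption, as are the `PositionSmoother` class and its window length. Frames in which no player was detected are skipped, and the same approach would apply to a per-frame distance estimate.

```cpp
#include <cassert>
#include <cstddef>
#include <deque>

// Moving average of the player's position over the last `window` frames in
// which the player was detected. The window length is an assumed tuning value:
// longer windows are steadier but respond more slowly to movement.
class PositionSmoother {
public:
    explicit PositionSmoother(std::size_t window) : window_(window) {
        assert(window > 0);
    }

    // Record one frame's measurement; frames without a detection are skipped.
    void add(bool found, double x, double y) {
        if (!found) return;
        samples_.push_back({x, y});
        if (samples_.size() > window_) samples_.pop_front();  // drop oldest
    }

    std::size_t count() const { return samples_.size(); }
    bool ready() const { return samples_.size() == window_; }

    // Averages over the buffered samples; call only when count() > 0.
    double meanX() const { return mean(&Sample::x); }
    double meanY() const { return mean(&Sample::y); }

private:
    struct Sample {
        double x;
        double y;
    };

    double mean(double Sample::*field) const {
        assert(!samples_.empty());
        double sum = 0.0;
        for (const Sample& s : samples_) sum += s.*field;
        return sum / static_cast<double>(samples_.size());
    }

    std::size_t window_;
    std::deque<Sample> samples_;
};
```

Waiting until `ready()` is true before sending a launch distance to the microcontroller avoids acting on an estimate built from only one or two frames.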
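For the step that estimates the player's approximate distance, one simple model (named here as a swapped-in technique, not the project's trial-and-error algorithm) is the pinhole-camera approximation D = f * H / h: an object of real height H that appears h pixels tall is roughly D away, where f is the camera's focal length in pixels. The sketch assumes f is calibrated from a single reference image taken at a measured distance; the function names and the use of the jersey height as H are hypothetical.

```cpp
#include <cassert>

// Assumed pinhole-camera model: D = f * H / h, with f in pixels, H in metres,
// h in pixels and D in metres.

// Calibrate the focal length from one reference image: an object of known
// height appeared refPixelHeight pixels tall at refDistanceM metres.
double focalLengthPx(double refPixelHeight, double refDistanceM,
                     double realHeightM) {
    assert(realHeightM > 0.0);
    return refPixelHeight * refDistanceM / realHeightM;
}

// Estimate the distance to an object of known height from its apparent height.
double estimateDistanceM(double pixelHeight, double realHeightM,
                         double focalPx) {
    assert(pixelHeight > 0.0);
    return focalPx * realHeightM / pixelHeight;
}
```

For example, if a 0.5 m tall jersey appears 200 pixels tall at a measured 5 m, then f = 2000 pixels, and a later reading of 100 pixels implies roughly 10 m. Because halving the apparent height doubles the estimate, small pixel errors matter most at long range.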